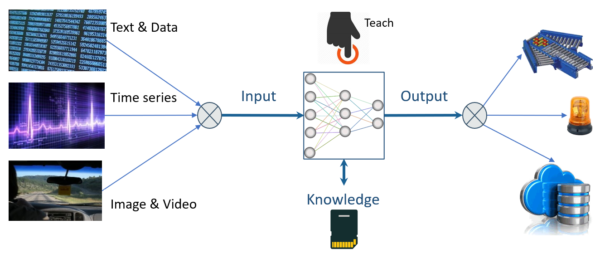

Real Intelligence at the Edge with NeuroMem (Neuromorphic Memories)

NeuroMem neural network: Trainable, Responsible, Explainable and Scalable

- Adaptive learning on the spot

- Built-in weight correction

- Highly scalable capacity

- Blazing-fast performance

- Low power consumption

- Knowledge traceability

- Simple I/Os for easy integration

NeuroMem for Smart Sensors

Power your sensors with edge intelligence. Whether on industrial machinery, under the hood of a vehicle, or around a wrist, the ability to learn and classify patterns coming directly from a sensor is game-changing.

NeuroMem for Smart Storage

Turn your storage devices into local, secure and configurable engines that analyze your data. Whether your data is text, audio or images, you can comprehend its content or detect novelty without moving it.

A technology already in action

NeuroMem in a Factory 4.0 system in a Russian steel furnace

NeuroTechnologijos installs a NeuroMem-powered monitoring system in a steel blast furnace in Magnitogorsk, Russia. The solution consists of a bank of NT Adaptive Controllers, designed and manufactured by NeuroTechnologijos and mounted in an industrial enclosure...

Neurons inspect fish in the North Sea

Pisces Fish Machinery Inc. has developed and sold over 50 smart cameras powered by NeuroMem neurons to inspect fish directly on the filleting lines on board fishing vessels. At the beginning of a new expedition, the fishermen train the neurons...

Affordable in-line inspection of solar glass

Surface inspection systems do not have to be a big investment in terms of budget, resources and time. General Vision has developed an AI camera, powered by a NeuroMem network, that detects defects and can be assembled in line with other identical cameras to cover any...